There’s change a-happening in the Florida auto insurance market.

Auto insurance is expensive for Floridians. The reason is that they file a lot of expensive claims, more than most. Floridians do this because… well that’s what I’ve been thinking a lot about lately.

First I’ll misquote Bastiat:

Claims Fraud is the great fiction through which everybody endeavors to live at the expense of everybody else.

Ok, let’s swipe some graphs from the indispensable III to illustrate the problem (source here and here).

Exhibit A:

So there’s a problem with auto insurance. Got it. Why?

Well, Florida is a No-Fault state, which means that beneath a certain threshold ($10,000 in this case) you claim on your own insurance policy when you get in an accident regardless of who hit whom. Everyone is pretty focused on the No-Fault aspect of the problem.

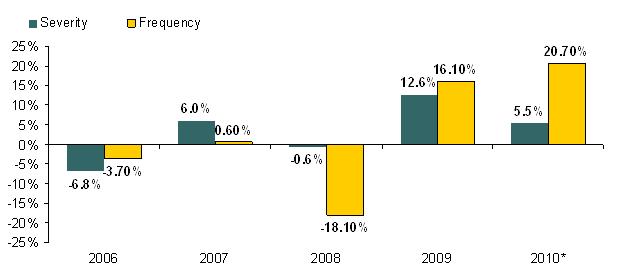

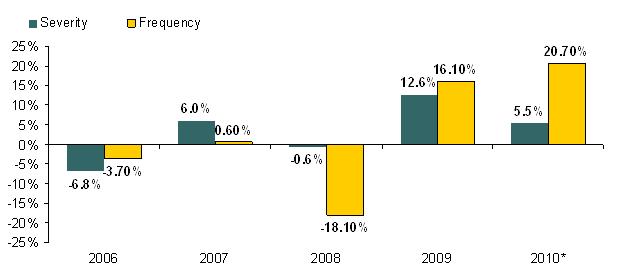

And there’s evidence of a problem. Here’s a graph detailing the growth in claims frequency and severity for No-Fault in Florida:

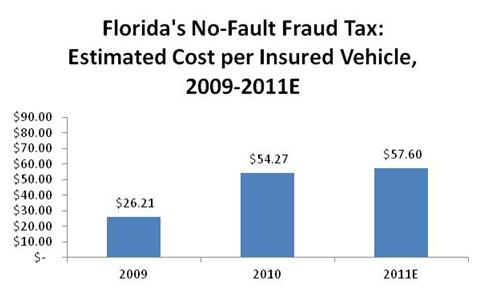

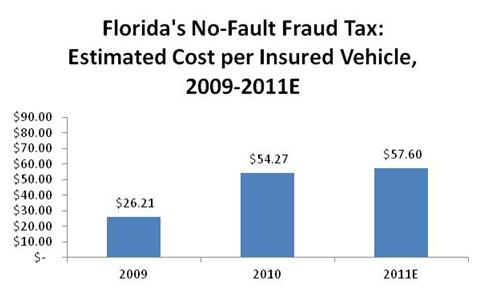

And newspapers have been going bananas down in FL, decrying the Florida No-Fault “Fraud Tax”. Catchy, non?

I’m not completely sure what a “Fraud Tax” is (I haven’t found any published methodology for calculating it anywhere), but here is the III’s view:

The combined impact of rising frequency and severity of claims is driving up the cost of pure premium, which is defined as the premium needed to pay for anticipated losses without considering other costs of doing business. The only reasonable explanation for this dramatic rise: no-fault fraud and abuse.
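For anyone who wants the arithmetic behind "combined impact": pure premium is conventionally broken down as claim frequency times claim severity, so increases in both compound rather than add. Here's a minimal sketch. The numbers are made up for illustration and are not taken from the III report, and the function name is mine.

```python
def pure_premium(frequency, severity):
    """Expected loss cost per insured vehicle.

    frequency: claims per insured vehicle per year
    severity:  average cost per claim
    """
    return frequency * severity


# Hypothetical inputs, chosen only to show the compounding effect.
base = pure_premium(frequency=0.02, severity=6000.0)

# Suppose frequency and severity each rise by 10 percent.
after = pure_premium(frequency=0.02 * 1.10, severity=6000.0 * 1.10)

change = after / base - 1
print(f"Pure premium change: {change:.1%}")
```

Two 10 percent increases push pure premium up by about 21 percent, not 20. Note that this says nothing about why frequency and severity are rising, which is the part in dispute.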

Even if you accept that claims frequency and severity are increasing in Florida, I’d say that’s a pretty weak assertion.

The assertions get stronger as the report continues.

Insurers also report suspected fraud to the National Insurance Crime Bureau (NICB), an insurer-funded, nonprofit organization of more than 1,000 members, including property/casualty insurers. The NICB is the nation’s leading organization dedicated to preventing, detecting and defeating insurance fraud and vehicle theft. The NICB gives a closer review to claims that are considered questionable and investigates them based on one or more indicators of possible fraud. A single auto insurance claim may be referred to the NICB for several reasons, and these “questionable claims” are flagged because they possess indicators of:

- Staged accidents

- Excessive medical treatment

- Faked or exaggerated injury

- Prior injuries (that are unreported in the new claim)

- Bills for service not rendered

- Solicitation of the accident victim(s)

A single claim may contain several referral reasons. Questionable claims involving staged accidents surged 52 percent in 2009. For 2010, early estimates suggest an even larger increase.

And the kicker is this graph:

No-Fault is increasing quite a lot. But EVERYTHING is increasing, isn’t it? And how about #2, there, Bodily Injury? Well, Bodily Injury is actually where the story is, in my mind. That’s the At-Fault coverage that extends above the $10,000 cap on No-Fault. You need to go to court and sue people and stuff for that.

What’s more, the Bodily Injury insurance market is 2.5x the size of No-Fault in Florida. BI is the 800-pound gorilla. Why isn’t anyone talking about it if it’s in the dumps, too?

Chris Tidball has an interesting analysis (and is now on the blogroll!):

The problem in Florida is substantial. First, the threshold for determining whether a party may sue has been watered down by the courts over the years, meaning that virtually any injury, irrespective of how minor it actually is, can be adjudicated, even if the true interpretation of the tort threshold says otherwise.

Secondly, a person is able to sue for any percentage of damage for which they were not at fault. Even if a person is 99.9 percent at fault, they are able to sue for damages.
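The second point describes what is usually called pure comparative negligence: a claimant's recovery is scaled down by their own share of fault, with no cutoff. A rough sketch of that rule, with a hypothetical function name and made-up damages:

```python
def recoverable_damages(total_damages, claimant_fault_share):
    """Damages recoverable under a pure comparative negligence rule.

    claimant_fault_share is the claimant's own share of fault,
    expressed as a fraction between 0 and 1.
    """
    if not 0.0 <= claimant_fault_share <= 1.0:
        raise ValueError("fault share must be between 0 and 1")
    return total_damages * (1.0 - claimant_fault_share)


# Hypothetical case: $100,000 in damages, claimant 99.9 percent at fault.
recovery = recoverable_damages(100_000.0, 0.999)
print(f"Recoverable: ${recovery:,.2f}")
```

Under this simplified rule, even a claimant who is 99.9 percent at fault can still pursue roughly $100 of a $100,000 loss, so there is no fault level at which a suit is ruled out.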

None of that is No-Fault and I’m not sure how it’s related to the organized staged accidents and No-Fault Fraud. Are we addressing the wrong problem?

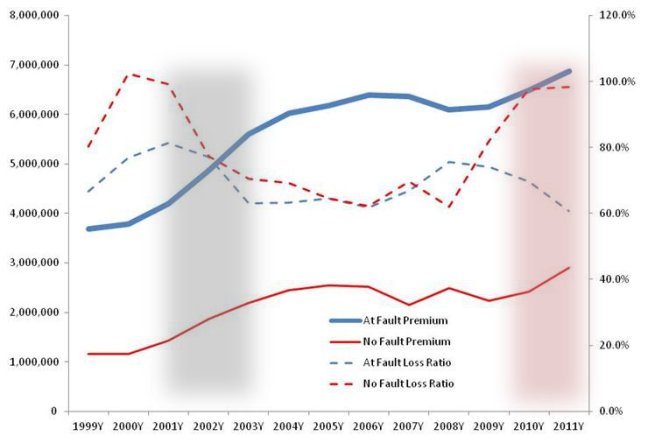

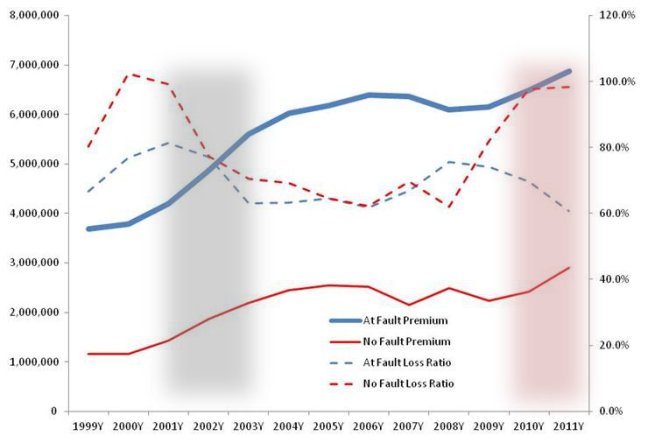

Ok, give me your hand and let’s walk slowly through this regrettably dense graph I put together with SNL data on the Florida market.

The solid lines are the written premium levels (left axis – see how much higher At-Fault is?) and the dotted lines are reported loss ratios (right axis):

Note that there is a bit of a basis mismatch in the data presented. The loss ratios are reported losses over earned premium while the premium is written premium, which is a more responsive indicator of market pricing.
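To make the mismatch concrete, here's a rough sketch with made-up numbers (not the SNL data). It assumes annual policies written evenly through the year, so about half of each year's written premium is earned in the following year. That's a textbook simplification, and the function name is mine.

```python
def earned_premium(written_prior, written_current):
    """Approximate calendar-year earned premium.

    Assumes annual policies written evenly through each year, so half of
    the prior year's written premium and half of the current year's are
    earned in the current year.
    """
    return 0.5 * written_prior + 0.5 * written_current


# Hypothetical market where written premium is rising year over year.
written_prior = 100.0
written_current = 120.0
losses_current = 90.0

earned_current = earned_premium(written_prior, written_current)

loss_ratio_earned = losses_current / earned_current    # basis of the dotted lines
loss_ratio_written = losses_current / written_current  # basis of the solid lines

print(f"Earned premium:          {earned_current:.1f}")
print(f"Loss ratio (earned):     {loss_ratio_earned:.1%}")
print(f"Loss ratio (written):    {loss_ratio_written:.1%}")
```

When rates are going up, earned premium lags written premium, so the reported loss ratio looks worse than it would on a written basis. The reverse holds when written premium is falling.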

The grey region is a classic market turn. Claims costs go up massively, insurers lose lots of money and premiums respond after a lag. It happened in No-Fault and it happened in At-Fault.

This time is different. No-Fault is playing that movie over again but At-Fault doesn’t seem to be, in spite of the increase in staged accidents noted above. What are we to make of this?

Some possibilities:

- The problem isn’t fraud, which appears to be affecting both No-Fault and At-Fault similarly without a similar impact on loss ratios;

- Fraud incidence is higher in At-Fault but fraudsters are less successful when they need to go to court.

One observation on the graph above: In 2007, Florida’s No-Fault law expired and FL was At-Fault only for 3 months. But the loss ratio for that year was higher!

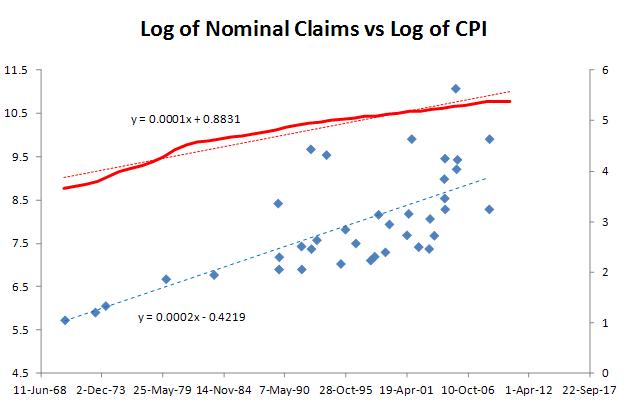

What I’d really like is to find a natural experiment in a state that modified its No-Fault laws. The only example I can see is in Colorado, which repealed its No-Fault system in 2003.

Here’s what happened:

At-Fault loss ratios dropped a bit immediately, but the drop persists!

Remember the purpose of No-Fault was to lower expenses. I’d imagine that claims expenses and overhead have also risen on the At-Fault book to deal with the increase in smaller claims.

Does that mean that we should expect a higher expense ratio on the At-Fault FL book once No-Fault reform comes into play? That would suggest an advantage to carriers with efficient back offices…