Image compression.

In the Machine Learning class, we just learned the k-means clustering technique. It sounds complicated, but as usual in computer science and math, the jargon is harder to learn than the concepts.

The idea is that you express each color in an image as a combination of red, green and blue. That lets you plot every color as a point on a 3D graph, where similar colors land near each other. Dark red and bright red, for example, both end up in the high-red, low-green, low-blue part of the graph.
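To make "near each other" concrete, here's a small Python sketch that treats each color as an (r, g, b) point and measures the squared straight-line distance between two colors. It assumes 8-bit channels running from 0 to 255, and the three sample colors are just illustrative values. Skipping the square root doesn't change which color counts as closest, so it's left out.

```python
# Each color is a point (r, g, b), with every channel assumed to run from 0 to 255.
def squared_distance(c1, c2):
    """Squared Euclidean distance between two RGB colors in 3D color space."""
    return sum((a - b) ** 2 for a, b in zip(c1, c2))

dark_red = (139, 0, 0)
bright_red = (255, 0, 0)
blue = (0, 0, 255)

print(squared_distance(dark_red, bright_red))  # 13456
print(squared_distance(dark_red, blue))        # 84346
```

Dark red sits much closer to bright red than to blue, which is exactly the "similar colors plot near each other" property the clustering relies on.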

Now pick a number of colors that you want to compress the image to. I’ve picked 16.
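Why does fewer colors mean a smaller image? A rough back-of-the-envelope sketch, assuming the compressed image is stored as a 16-color palette plus one palette index per pixel, compared with an original that spends 8 bits each on red, green and blue. The 128 by 128 size is hypothetical, and real file formats add headers and their own compression on top, so treat the ratio as a ballpark figure.

```python
import math

def bits_per_pixel(num_colors):
    """Bits needed to store one palette index when the image uses num_colors colors."""
    return math.ceil(math.log2(num_colors))

def compressed_bits(width, height, num_colors):
    """Palette-image size: one index per pixel plus a palette of 24-bit colors."""
    return width * height * bits_per_pixel(num_colors) + num_colors * 24

width, height = 128, 128            # hypothetical image size
original = width * height * 24      # 8 bits each for red, green and blue
compressed = compressed_bits(width, height, 16)

print(bits_per_pixel(16))               # 4
print(original, compressed)             # 393216 65920
print(round(original / compressed, 2))  # 5.97
```

With 16 colors each pixel needs only 4 bits instead of 24, so the image shrinks to roughly a sixth of its raw size.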

Next you randomly drop 16 points into that plot of all the colors used. Every color in the image will be closer (in 3D space) to one of those 16 points than to the others, so each color belongs to its nearest point.
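Here's a minimal Python sketch of dropping the random points and grouping each color with its closest one. It assumes the starting points are picked as random colors taken from the image itself, which is a common way to start; dropping points anywhere in the color cube also works but can leave a point with no colors near it. The six-pixel "image", k = 2 instead of 16, and the names `random_start` and `assign` are all made up for illustration.

```python
import random

def squared_distance(c1, c2):
    """Squared Euclidean distance between two RGB colors."""
    return sum((a - b) ** 2 for a, b in zip(c1, c2))

def random_start(colors, k, seed=0):
    """Drop k starting points by picking k distinct colors from the image at random."""
    rng = random.Random(seed)
    return rng.sample(sorted(set(colors)), k)

def assign(colors, centroids):
    """For each color, return the index of the closest starting point."""
    return [min(range(len(centroids)), key=lambda j: squared_distance(c, centroids[j]))
            for c in colors]

# A tiny made-up "image" of six colors, grouped around k = 2 points instead of 16.
pixels = [(250, 10, 10), (200, 0, 0), (255, 40, 30),
          (10, 10, 240), (0, 30, 200), (20, 0, 255)]
centroids = random_start(pixels, 2)
labels = assign(pixels, centroids)
print(centroids)
print(labels)
```

Which two colors get picked depends on the random seed, so the first grouping can be lopsided; that's fine, because the next step fixes it.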

Here comes the clever part.

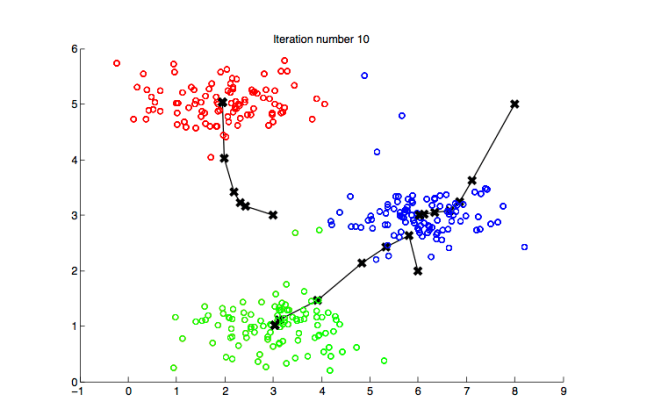

Now you have 16 groups of colors, each made up of the colors closest to one of the random points. For each group, find the midpoint (the average) of its colors and move that group's point there. Then regroup every color by its new closest point and repeat. The image above shows this working in 2D space.
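A sketch of the whole loop in Python: group colors by their closest point, move each point to the average of its group, and repeat until no point moves. It assumes plain Euclidean distance, starting points picked at random from the image's own colors, and a cap of 100 rounds in case it never settles. The `kmeans` function and the sample pixels are illustrative, not code from the class.

```python
import random

def squared_distance(c1, c2):
    """Squared Euclidean distance between two RGB colors."""
    return sum((a - b) ** 2 for a, b in zip(c1, c2))

def nearest(color, centroids):
    """Index of the point closest to color."""
    return min(range(len(centroids)), key=lambda j: squared_distance(color, centroids[j]))

def kmeans(colors, k, max_iters=100, seed=0):
    """Group colors around k points, moving each point to its group's midpoint until nothing moves."""
    rng = random.Random(seed)
    centroids = rng.sample(sorted(set(colors)), k)  # random starting points from the image
    for _ in range(max_iters):
        labels = [nearest(c, centroids) for c in colors]  # group by closest point
        new_centroids = []
        for j in range(k):
            group = [c for c, lab in zip(colors, labels) if lab == j]
            if group:
                # Midpoint of the group: average red, green and blue separately.
                new_centroids.append(tuple(sum(ch) / len(group) for ch in zip(*group)))
            else:
                new_centroids.append(centroids[j])  # empty group: leave its point alone
        if new_centroids == centroids:  # no point moved, so the groups are final
            break
        centroids = new_centroids
    return centroids, [nearest(c, centroids) for c in colors]

pixels = [(250, 10, 10), (200, 0, 0), (255, 40, 30),
          (10, 10, 240), (0, 30, 200), (20, 0, 255)]
centroids, labels = kmeans(pixels, 2)
print(centroids)
print(labels)
```

On these made-up pixels the three reds end up sharing one point and the three blues the other, even when both starting points happen to land on reds.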

Eventually the points stop moving, and you wind up with all of the original colors sorted into ‘likeness’ groups. Each group's midpoint becomes its new color: every pixel is repainted with the midpoint of its group, and the image is compressed!
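The final repainting step, sketched in Python. The `centroids` and `labels` below are hypothetical k-means output for a six-pixel image with two groups, and rounding each midpoint back to whole 0 to 255 values is an assumption about how the new colors get stored.

```python
def recolor(labels, centroids):
    """Replace every pixel with the midpoint color of its group, rounded to whole channel values."""
    palette = [tuple(round(ch) for ch in c) for c in centroids]
    return [palette[lab] for lab in labels]

# Hypothetical k-means result: two group midpoints and each pixel's group number.
centroids = [(235.0, 50 / 3, 40 / 3), (10.0, 40 / 3, 695 / 3)]
labels = [0, 0, 0, 1, 1, 1]

compressed = recolor(labels, centroids)
print(compressed)
# [(235, 17, 13), (235, 17, 13), (235, 17, 13), (10, 13, 232), (10, 13, 232), (10, 13, 232)]
print(len(set(compressed)))  # 2
```

However many distinct colors went in, the repainted image uses only as many colors as there are groups.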

Here’s an image of my dog Max that I just compressed this way.